I turned on airplane mode.

No internet. No cloud. No server anywhere.

Just my iPhone 17 Pro, a 3.06GB model sitting entirely on my device, and a question about Narendra Modi.

The response came back in under three seconds.

Some facts were right. Some were hallucinations. But honestly? That is not the point.

The point is that a 4-billion-parameter large language model ran completely offline on a smartphone and gave me coherent, usable answers in seconds.

No API call. No data sent anywhere. Just the model, the chip, and the question.

That is something.

How I Got Here

I have been watching the on-device AI space for a while now.

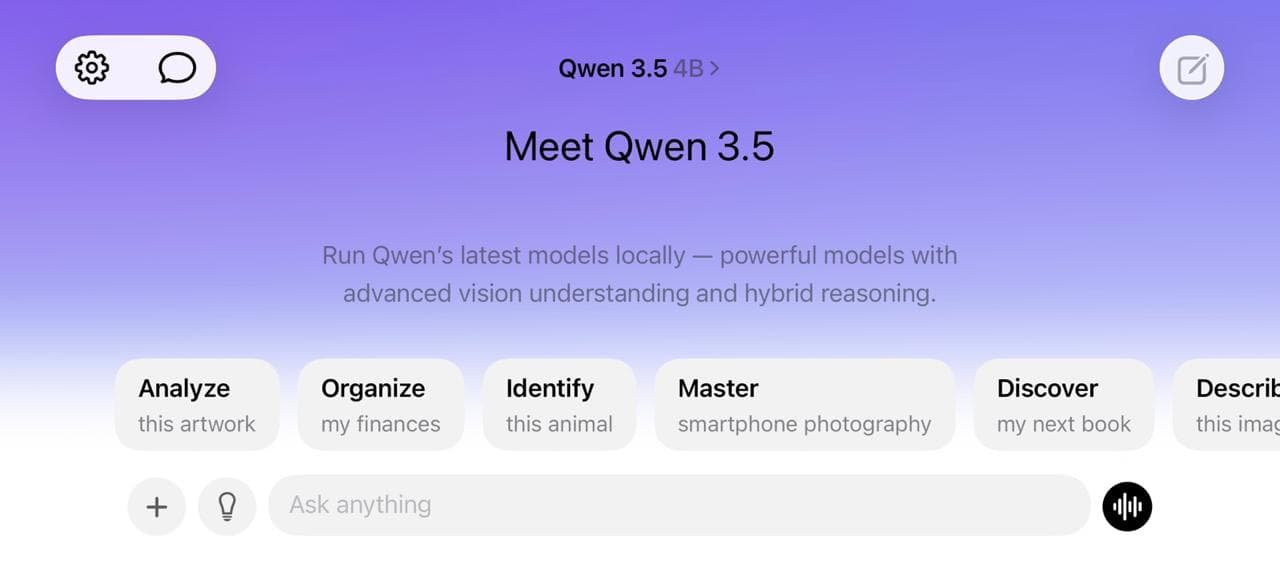

And the buzz around Qwen 3.5 4B on X was impossible to ignore. People were talking about its architecture like it was a genuine leap forward, not just another model release.

So I opened an app called Locally AI, downloaded the 4B model, and started testing.

Here is what the app gives you in terms of model options:

- 0.8B model: 1.03GB, works on iPhone 14 and newer

- 2B model: a solid middle ground for older hardware

- 4B model: 3.06GB, recommended for iPhone 15 Pro and newer

- 9B model: for when you really want to push it
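As a rough sanity check on those file sizes, you can divide each download by its nominal parameter count to get the average storage per parameter. This back-of-the-envelope sketch assumes that GB means 10^9 bytes and that the whole download counts as model data. The app does not say which quantization format it uses, so treat the results as estimates, not a description of the actual files.

```python
# Back-of-the-envelope estimate of average storage per parameter.
# Assumptions: "GB" = 10**9 bytes, parameter counts are the nominal
# 0.8B / 4B, and the entire download is counted (weights plus any extras),
# so this is only an approximation of the real quantization format.

def implied_bits_per_param(file_gb: float, params_billions: float) -> float:
    """Average number of bits stored per nominal parameter."""
    total_bits = file_gb * 1e9 * 8
    return total_bits / (params_billions * 1e9)


def fp16_size_gb(params_billions: float) -> float:
    """Size the weights would need at 16 bits (2 bytes) per parameter."""
    return params_billions * 1e9 * 2 / 1e9


# Sizes as listed in the app: (download size in GB, parameters in billions).
models = {"0.8B": (1.03, 0.8), "4B": (3.06, 4.0)}

for name, (size_gb, params_b) in models.items():
    bits = implied_bits_per_param(size_gb, params_b)
    print(f"{name}: {size_gb} GB download, ~{bits:.1f} bits/param "
          f"(fp16 weights would be ~{fp16_size_gb(params_b):.1f} GB)")
```

By this estimate, the 4B download stores about 6 bits per parameter. That is well under the 16 bits of unquantized fp16 weights, which points to a quantized build of the model.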

I went with 4B. It hits the sweet spot between capable and practical. And since I'm on an iPhone 17 Pro, it ran without breaking a sweat.

The app also has a solid library of other models, including Apple Foundation, LFM 2.5, LFM 2, Ministral 3, SmolLM 3, Gemma 3n, Gemma 2, Granite 4.0, Llama 3.2, and a bunch of legacy models.

But after everything I'd read about Qwen 3.5's architecture, I had to start there.

What Makes Qwen 3.5 4B Different

Here is what most people skimming the headlines are missing.

This is not just a compressed version of a bigger model, which is what many small LLMs are. Qwen 3.5 was designed from scratch to scale down efficiently while keeping multimodal understanding, long-context handling, and agent-style reasoning intact.

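The architecture combines Gated Delta Networks (Gated DeltaNet) with sparse Mixture-of-Experts. Its layers alternate between linear attention and full attention in a 3:1 ratio: three linear-attention layers for every full-attention layer. That matters because standard attention scales quadratically with context length. Every token is compared with every other token, so doubling the context roughly quadruples the attention computation.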
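Here is a toy sketch of the two ideas in that paragraph: a 3:1 interleaving of linear-attention and full-attention layers, and the quadratic growth of full attention. The 24-layer depth is a hypothetical number chosen for illustration, not Qwen 3.5 4B's real configuration. The score count also ignores causal masking, which roughly halves it without changing the quadratic shape.

```python
# Toy illustration: (1) a 3:1 linear/full attention layer schedule and
# (2) how the size of full attention's score matrix grows with context length.
# The 24-layer depth below is hypothetical, chosen only for illustration.

def hybrid_layer_pattern(num_layers: int, linear_per_full: int = 3) -> list[str]:
    """Every (linear_per_full + 1)-th layer is full attention; the rest are linear."""
    period = linear_per_full + 1
    return ["full" if (i + 1) % period == 0 else "linear" for i in range(num_layers)]


def full_attention_scores(context_len: int) -> int:
    """Entries in the n x n query-key score matrix of one full-attention layer."""
    return context_len * context_len


pattern = hybrid_layer_pattern(24)
print(pattern[:8])
print(pattern.count("linear"), "linear :", pattern.count("full"), "full")

for n in (4_096, 8_192):
    print(n, "tokens ->", full_attention_scores(n), "scores per full-attention layer")
```

Doubling the context from 4,096 to 8,192 tokens takes each full-attention layer from about 16.8 million to about 67.1 million scores, which is four times as many. In a 3:1 layout, only one layer in four pays that price. The linear-attention layers do a fixed amount of work per token.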

Qwen 3.5 bends that curve: only one layer in four pays the quadratic price.

Here is where the KV cache comes in. Every time a language model generates a token, it computes attention scores between that token and the tokens before it. Without caching, it recomputes the keys and values for the entire history at every step. That is brutal on speed. A KV cache stores those computed key and value pairs, so at each step the model only processes the new token, not the entire history. The trade-off is memory: the cache grows with every token of context.
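Here is a minimal sketch of that bookkeeping. It assumes a single attention head, toy 2-dimensional vectors, and made-up weight matrices, so nothing in it comes from Qwen or Locally AI. It decodes the same short sequence twice, once re-projecting every past token at each step and once with a KV cache, and counts the key/value projections each approach performs.

```python
import math

Vector = list[float]
Matrix = list[list[float]]

# Toy 2-d projection weights (hypothetical values, not from any real model).
W_Q: Matrix = [[1.0, 0.0], [0.0, 1.0]]
W_K: Matrix = [[0.5, 0.1], [0.2, 0.9]]
W_V: Matrix = [[1.0, 0.3], [0.0, 0.7]]


def matvec(m: Matrix, x: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def attend(q: Vector, keys: list[Vector], values: list[Vector]) -> Vector:
    """Scaled dot-product attention of one query over all given positions."""
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
    top = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]


def decode_without_cache(tokens: list[Vector]) -> tuple[list[Vector], int]:
    """At every step, re-project keys and values for the entire history."""
    outputs, kv_projections = [], 0
    for t in range(1, len(tokens) + 1):
        history = tokens[:t]
        keys = [matvec(W_K, x) for x in history]
        values = [matvec(W_V, x) for x in history]
        kv_projections += len(history)
        outputs.append(attend(matvec(W_Q, tokens[t - 1]), keys, values))
    return outputs, kv_projections


def decode_with_cache(tokens: list[Vector]) -> tuple[list[Vector], int, int]:
    """Project only the newest token and append its key/value to the cache."""
    keys: list[Vector] = []
    values: list[Vector] = []
    outputs, kv_projections = [], 0
    for x in tokens:
        keys.append(matvec(W_K, x))
        values.append(matvec(W_V, x))
        kv_projections += 1
        outputs.append(attend(matvec(W_Q, x), keys, values))
    return outputs, kv_projections, len(keys)


tokens = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.2, -0.3]]
slow, slow_work = decode_without_cache(tokens)
fast, fast_work, cache_len = decode_with_cache(tokens)
print(slow_work, fast_work, cache_len)  # prints: 10 4 4
```

Both loops produce the same outputs. The cached version does 4 key/value projections instead of 10, and the gap widens as the sequence grows (n(n+1)/2 versus n). The price is the cache itself, which holds one key and one value per token and keeps growing.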

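In Qwen 3.5's linear-attention layers, the KV cache is replaced by a fixed-size state that compresses the context. Only the full-attention layers keep a cache that grows with every token, which dramatically cuts KV cache memory requirements. Add quantized weights to that (at 16 bits per parameter, 4 billion parameters would need about 8GB on their own), and that is how a 4-billion-parameter model fits in a 3GB download and still responds fast on a phone chip.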
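Here is a toy version of the fixed-size-state idea. It uses the gated delta rule update described for Gated DeltaNet, S_t = α_t S_{t−1}(I − β_t k_t k_tᵀ) + β_t v_t k_tᵀ, with the output read as S_t q_t. The dimension, the constant α and β values, and the random inputs are illustrative assumptions. In a real model, the network computes these gates per token, and this sketch is not Qwen 3.5's actual implementation.

```python
import math
import random

Vector = list[float]
Matrix = list[list[float]]


def l2_normalize(x: Vector) -> Vector:
    norm = math.sqrt(sum(v * v for v in x)) or 1.0
    return [v / norm for v in x]


def gated_delta_step(S: Matrix, k: Vector, v: Vector, alpha: float, beta: float) -> Matrix:
    """One update S_t = alpha * S_{t-1} (I - beta k k^T) + beta v k^T.

    alpha in (0, 1] decays old memory (the gate); beta in (0, 1] controls how
    strongly the value stored under key k is overwritten (the delta rule).
    S has shape d_v x d_k and never grows, no matter how many tokens arrive.
    """
    Sk = [sum(S[i][j] * k[j] for j in range(len(k))) for i in range(len(S))]  # S_{t-1} k
    return [[alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
             for j in range(len(k))]
            for i in range(len(S))]


def read(S: Matrix, q: Vector) -> Vector:
    """The output for query q is simply S q."""
    return [sum(S[i][j] * q[j] for j in range(len(q))) for i in range(len(S))]


d = 4
rng = random.Random(0)  # toy random tokens, seeded for reproducibility
S = [[0.0] * d for _ in range(d)]
kv_cache_floats = 0
for t in range(1, 1001):
    k = l2_normalize([rng.uniform(-1, 1) for _ in range(d)])
    v = [rng.uniform(-1, 1) for _ in range(d)]
    S = gated_delta_step(S, k, v, alpha=0.95, beta=0.5)
    kv_cache_floats += 2 * d  # what a KV cache would add for this token
    if t in (10, 100, 1000):
        state_floats = sum(len(row) for row in S)
        print(f"{t:>5} tokens: recurrent state {state_floats} floats, "
              f"KV cache {kv_cache_floats} floats")
```

The recurrent state stays at d × d = 16 numbers whether it has seen 10 tokens or 1,000. A KV cache for a single head of the same width grows by 2d numbers per token. In a hybrid 3:1 layout, only the full-attention layers still carry that growing cache.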

It is engineered to be fast and memory-efficient by design. Not as an afterthought.

What the Test Actually Felt Like

I typed my question about Narendra Modi.

Three seconds. Full paragraphs back.

Some facts accurate. Some confidently wrong. But coherent, structured, and genuinely readable.

Let me be clear about something. I did not go into this test expecting perfection. I was watching a 4-billion-parameter model answer a question about a world leader, on a phone, with zero network connection, in three seconds.

That is the bar we have crossed. And we crossed it quietly.

This is Just the Beginning

Think about what on-device AI actually unlocks when it gets good.

- A doctor running a diagnostic assistant in a clinic with no internet.

- A journalist working offline in a country with restricted connectivity.

- A developer building tools that process sensitive data without it ever leaving the device.

We are not there yet. A 4B model with hallucinations is not replacing cloud AI tomorrow.

But the trajectory is clear.

The pace of improvement in on-device models over the last 18 months has been extraordinary. And the fact that you can install a legitimate LLM on your iPhone in a few taps, flip on airplane mode, and get real answers to complex questions tells you everything about where this is going.

The edge AI era is not coming.

It has already started.

If you have not tried running a local model on your phone yet, download Locally AI and spend 20 minutes with it. I promise you will feel the same thing I felt.

Something that felt futuristic is already in your pocket. We are just at the very beginning of figuring out what to do with it.

What would you build if your users' AI ran entirely on their own device?